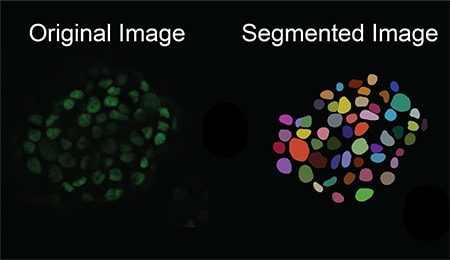

Observing individual cells through microscopes can reveal a range of important cell biological phenomena that frequently play a role in human diseases, but the process of distinguishing single cells from each other and their background is extremely time consuming — and a task that is well-suited for AI assistance.

AI models learn how to carry out such tasks by using a set of data annotated by humans, but the process of distinguishing cells from their background, called “single-cell segmentation,” is both time-consuming and laborious. As a result, there are limited amounts of annotated data to use in AI training sets. UC Santa Cruz researchers have developed a method to solve this by building a microscopy image generation AI model to create realistic images of single cells, which are then used as “synthetic data” to train an AI model to better carry out single-cell segmentation.

The software is described in a new paper published in the journal iScience. The project was led by Assistant Professor of Biomolecular Engineering Ali Shariati and his graduate student Abolfazl Zargari. The model, called cGAN-Seg, is freely available on GitHub.

Here is an exclusive Tech Briefs interview, edited for length and clarity, with Shariati.

Tech Briefs: What was the biggest technical challenge you faced while developing this image-to-image generative AI model?

Shariati: The biggest technical challenge that we needed to overcome was to generate images that are quite close to what we see as a cell. Compared to other image-to-image models, one difference is that cells are shape-changing objects. They show a lot of variation in their morphology, and each has a unique appearance. That required us to modify the existing image-to-image model to make the images look like what we get from a microscopy machine. It’s just the nature of the biology of the cells that they change their shapes — show morphological variations. Then we needed to modify the structure of the existing networks to make sure that what we get is not too far from what the microscopy machine generates.

Tech Briefs: What was the catalyst for your work? How did this project come about?

Shariati: If you want to do microscopy, traditionally you take images from the microscopes and you see cells in them. Then, how do we know where the cells are? How can we identify the boundaries of the cells? And are they different sizes? Obviously, doing this manually could be very labor intensive and time consuming. In the past few years, AI models have transformed how quickly we can identify the boundaries of a cell. But for any of these AI models to work, you first need to generate a set of annotated, labeled images of cells.

That means you need experts, humans, to tell the model, ‘This is a cell, this is not a cell, this is …’ That process itself is quite time consuming. And then we have a variety of cell types, thousands of different types of cells. So, to make a model that can recognize all of these you need that type of annotated dataset; it doesn’t exist for microscopy images. It may exist for other imaging scenarios, but for us, it doesn’t exist. So, this model addresses exactly that problem of limited annotated data for microscopy images. Because this model will generate images that are labeled and annotated, you can use it to train your model immediately.

Tech Briefs: The article I read says, ‘In the future, the researchers hope to use the technology they have developed to move beyond still images to generate videos, which can help them pinpoint which factors influence the fate of a cell early in its life and predict their future.’ How is that coming along? Do you have any updates?

Shariati: Going from a snapshot to a sequence of images requires some modifications to capture how the cells are moving. One unique feature of cells, which you don't find in non-living systems, is that cells divide. If, all of a sudden, you have an object that becomes two, that's another feature of cells. They may move, they may divide. So there are a couple of obstacles we are overcoming. But I think the initial results are pretty promising. We are able to generate videos of a cell for a few seconds. The input of this model is a snapshot (one frame), and the model tells us the future of the cell. Then we can compare that prediction with the ground truth: if our model predicted that the cell is going to divide, did that cell actually divide, and how far are we from the reality of the video that we see?

Tech Briefs: You’re quoted in the article as saying, ‘We want to see if we are able to predict the future states of a cell, like if the cell is going to grow, migrate, differentiate, or divide.’ Are there any updates on that front?

Shariati: It's pretty similar to the last question of how accurate we are with our predictions. I think the movies we are generating look, by some metrics of similarity, to be not too far from the real movies. So, we see good similarity scores; but to what extent our predictions are accurate, I think we need to wait for that.
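As a rough illustration of the kind of comparison Shariati describes, the sketch below scores a generated video against its ground-truth video frame by frame. The interview does not say which similarity metrics the team uses, so peak signal-to-noise ratio (PSNR) is an assumption here, and the function names `mse`, `psnr`, and `video_similarity` are hypothetical. Frames are assumed to be grayscale images stored as nested lists of pixel values.

```python
from math import inf, log10


def mse(frame_a, frame_b):
    """Mean squared error between two grayscale frames of the same shape."""
    if len(frame_a) != len(frame_b) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(frame_a, frame_b)
    ):
        raise ValueError("frames must have the same shape")
    pixel_count = sum(len(row) for row in frame_a)
    if pixel_count == 0:
        raise ValueError("frames must not be empty")
    squared_error = sum(
        (pa - pb) ** 2
        for row_a, row_b in zip(frame_a, frame_b)
        for pa, pb in zip(row_a, row_b)
    )
    return squared_error / pixel_count


def psnr(frame_a, frame_b, max_value=255.0):
    """Peak signal-to-noise ratio in dB; higher means more similar."""
    error = mse(frame_a, frame_b)
    if error == 0:
        return inf  # identical frames
    return 10 * log10(max_value ** 2 / error)


def video_similarity(generated, real):
    """Average PSNR over paired frames of a generated and a real video."""
    if len(generated) != len(real) or not generated:
        raise ValueError("videos must be non-empty and have the same length")
    scores = [psnr(g, r) for g, r in zip(generated, real)]
    return sum(scores) / len(scores)


# Toy example: two 2x2 frames that differ in a single pixel.
real_frame = [[0, 0], [0, 0]]
generated_frame = [[0, 0], [0, 255]]
score = video_similarity([generated_frame], [real_frame])
```

A pixel-level score like this only measures how close the generated frames look to the real ones. As Shariati notes, a good similarity score does not by itself show that a predicted event, such as a division, actually happened in the real video.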

Tech Briefs: What are your next steps? Do you have any plans for further research or work?

Shariati: I think the overall goal is the harmonization of the models that are used to analyze microscopy images. At the moment, everybody has a set of tools that they use. So, we are trying to work together with others to have a set of tools that are a standard way of producing a microscopy image. It will give you a report of what is in the image: what are the cell types, are they cancerous cells, are they normal cells, and so on.

Also, maybe we can use some of the more recent advancements in the AI field to make the conversation between a microscope and humans a little bit easier.

Tech Briefs: Do you have any advice for engineers or researchers aiming to bring their ideas to fruition, broadly speaking?

Shariati: I would say that identifying the right problem and knowing the bottleneck in that area is the key. Knowing what exactly is needed so that you make something people actually need and not something that is just based on your own curiosity. So, identifying the bottleneck could be a good way of engineering new software or new hardware.