Factories of all sizes are incorporating automation at ever-increasing rates. One reason is reshoring: automating factories lowers labor costs for U.S. manufacturing, so that domestic production becomes more cost-effective compared with offshoring. Offshoring lets you take advantage of the much lower labor rates in many countries, but you have to add in the costs of more complex management and logistics, as well as the costs of shipping.

And then there’s the U.S. labor shortage, the difficulty of finding enough people who are willing to work in factories, while at the same time, a significant portion of the existing workforce is aging toward retirement.

And of course, the increasing productivity gains due to automation are good for the bottom line. Automation not only reduces the costs of production in the long run, but it also helps maintain reliable high-quality results and consistently predictable time frames.

The downside of automating an existing factory is the initial investment, not just in dollars, but also in the downtime needed to make such basic changes. However, most factories already have some automated processes using PLCs and other industrial controllers, generally running independently of each other, so that’s a head start. The next step is to integrate all of that into a single network: the Industrial Internet of Things (IIoT).

Ideally, the factory network should also connect with the office network, to enable management to make more informed decisions. And it should enable connecting to the cloud for complicated analytics and large data storage, as well as to the internet for connectivity beyond the factory.

Why Wireless?

To begin with, installing a wireless network is much less expensive than installing a wired one. The costs in labor, materials, and downtime for wiring a factory are far greater than for setting up a wireless system. And once in place, a wireless network is much more flexible. As processes change or new equipment is added, it is relatively simple to add or reprogram sensor nodes. Also, wireless sensors can be installed in locations that would be difficult to reach with cabling, for example on rotating machinery.

Designing the System

One of the main challenges with setting up wireless sensors is powering them. Even if you use some sort of power harvesting, you still need power storage, usually with batteries. If the batteries have to be changed often, wireless is a non-starter, so keeping power low is of primary importance.

One strategy for keeping sensor power low is to reduce the amount of data transmitted from each sensor because streaming data uses a relatively large amount of power. The trouble is that once an IIoT network has been installed, the maximum benefit comes from obtaining as much data as possible and sending it to a local server or to a cloud data center for analysis. But in general, only a small percentage of the data is relevant. External analytic data-crunching can sort the wheat from the chaff, but the size of the data stream and the amount of computing can overwhelm systems. A solution is to do preprocessing at the “edge” — right at the sensor — to determine what data is significant and only send that.
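As a rough illustration of "only send what is significant," the sketch below uses a simple deadband (report-by-exception) filter: a reading is transmitted only when it differs from the last transmitted value by more than a chosen amount. This is a generic technique shown for illustration, not the method used by any product discussed here; the function name `significant_readings` and the `deadband` parameter are assumptions made for this example.

```python
from typing import Iterable, Iterator, Tuple

Sample = Tuple[float, float]  # (timestamp, value)


def significant_readings(samples: Iterable[Sample], deadband: float) -> Iterator[Sample]:
    """Yield only the samples worth transmitting.

    A sample is yielded when it is the first one seen, or when its value
    differs from the last *transmitted* value by more than `deadband`.
    """
    last_sent = None
    for timestamp, value in samples:
        if last_sent is None or abs(value - last_sent) > deadband:
            last_sent = value
            yield timestamp, value


if __name__ == "__main__":
    # Hypothetical temperature readings in degrees C, one per second.
    raw = [(0, 20.0), (1, 20.1), (2, 20.2), (3, 21.0), (4, 21.1), (5, 19.9)]
    sent = list(significant_readings(raw, deadband=0.5))
    print(f"transmitted {len(sent)} of {len(raw)} samples: {sent}")
```

A wider deadband means fewer transmissions, at the cost of coarser data arriving at the server, so the value would need to be tuned for each kind of measurement.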

Low Power Edge Processing

But for edge processing to provide a net improvement in power reduction, the processing itself has to be done at low power. On that subject, I had a discussion with Nandan Nayampally, Chief Marketing Officer of BrainChip, makers of the Akida IP platform, a neural processor designed to provide ultra-low-power edge AI sensor network preprocessing. It starts with fully digital neuromorphic event-based AI and can learn on the device to trigger outputs only when there is significant information. It has its own memory that it uses to analyze the data, thus avoiding the energy-intensive transfer of data back and forth to utilize remote data storage. It also significantly reduces the amount of bandwidth required for the processing.

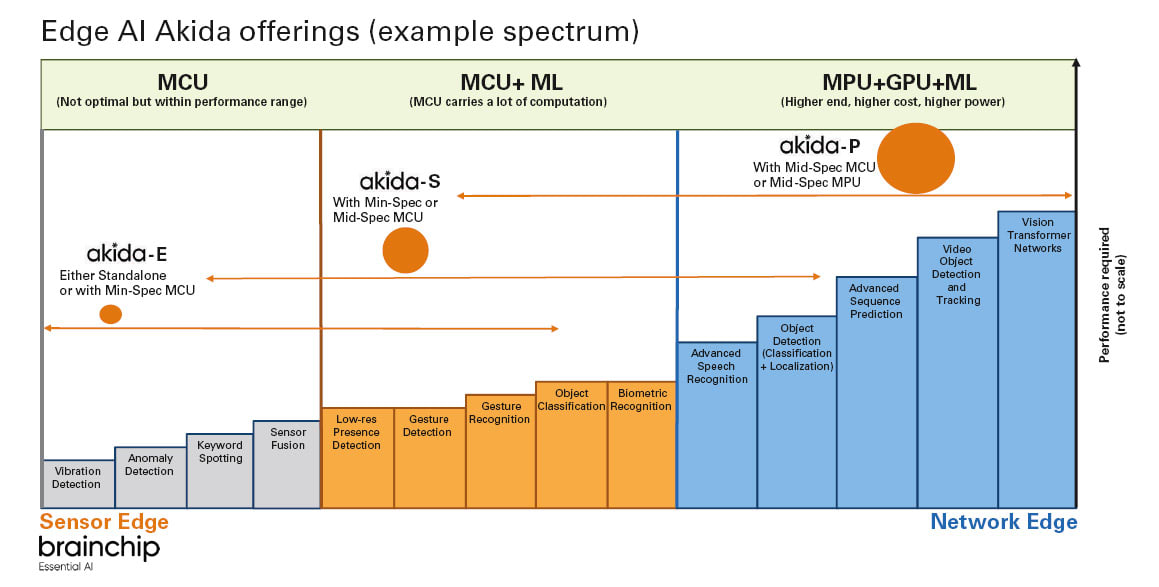

According to Nayampally, the series is focused on three general configurations (see Figure 2). The Akida-E is the most basic of the solutions, dealing with sensor inputs like vibration detection, anomaly detection, keyword spotting, and sensor fusion. Akida-S is more mid-range. It can do microcontroller (MCU)-level machine learning for more complex tasks such as presence detection, object classification, and biometric recognition. Finally, on the right-hand side of the figure, the Akida-P can perform higher-level tasks using a microprocessor (MPU), such as advanced object detection or sequence prediction.

Wi-Fi Networking

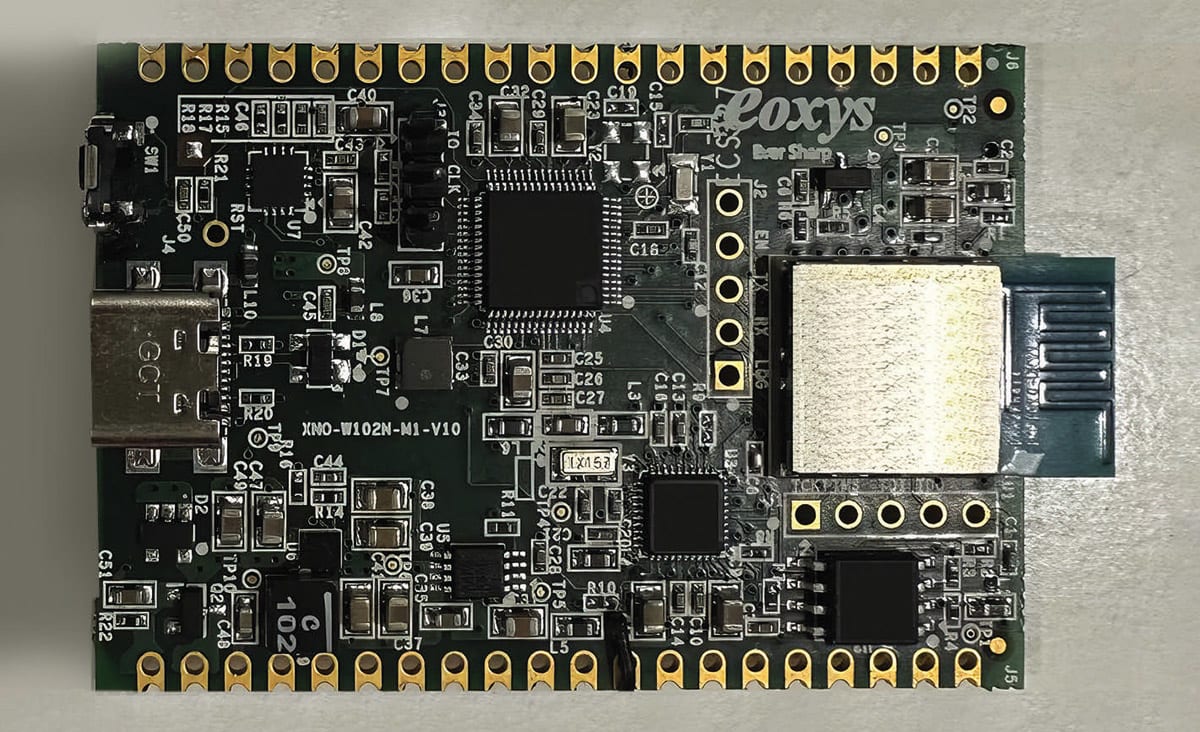

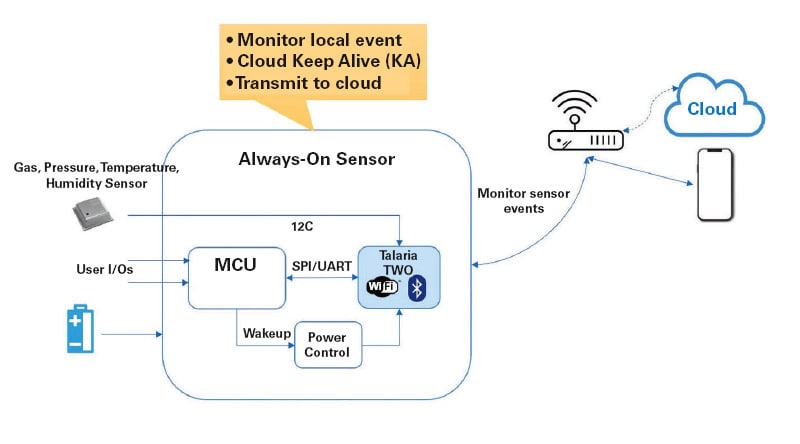

While the BrainChip solutions save power by doing advanced AI processing at the edge, InnoPhase IoT, Inc. focuses on the network architecture. Their Talaria Two Wi-Fi and BLE System on Chip (SoC) and module enable sensors to be networked via Wi-Fi. According to Deepal Mehta, InnoPhase IoT Senior Director of Business Development, since Wi-Fi enabled IoT end points use TCP/IP connectivity, they can communicate directly with the cloud without any need for intervening gateways. The chip also includes a Bluetooth Low Energy (BLE) gateway that can be used in two different ways. It enables legacy BLE connected devices to connect to the Wi-Fi network, and it facilitates provisioning the Wi-Fi enabled end points using an app on a cell phone.

In addition to saving power by eliminating gateways, they use a low-power radio to transmit the Wi-Fi signal. Key to the low-power radio is their method of digitally encoding and decoding the RF waveform. This method, which they call PolaRFusion™, is distinguished from other techniques that use analog processing, which consumes more power.

Use Case

A typical simple use case is temperature sensing. If a sensor mounted on a machine shows a trending rise in temperature, that could be an indication that there is a problem. However, instead of sending all the temperature data all the time, a local neural processor like BrainChip’s Akida can actively learn the standard temperature envelope for a particular installation and set an alarm level based on that. Then only the alarm needs to be transmitted, possibly via InnoPhase Wi-Fi, to the local server or to the cloud. Alternatively, once the alarm level has been reached, the continuous stream of temperature data can then be transmitted.
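To make the idea of a learned temperature envelope concrete, here is a minimal sketch that uses running statistics in place of a neural processor. It is a plain statistical stand-in, not how Akida works internally. The class name `TemperatureEnvelope` and the parameters `k`, `warmup`, and `min_margin` are all assumptions chosen for this example.

```python
import math


class TemperatureEnvelope:
    """Learn a normal operating range, then flag readings above it.

    Illustrative only: the alarm level is mean + max(k * std, min_margin),
    with mean and std learned from non-alarming readings (Welford's method).
    """

    def __init__(self, k: float = 3.0, warmup: int = 30, min_margin: float = 0.5):
        self.k = k                    # how many standard deviations count as abnormal
        self.warmup = warmup          # readings used purely for learning
        self.min_margin = min_margin  # floor so a very steady signal is not over-sensitive
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0                # running sum of squared deviations

    def _learn(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    def alarm_level(self) -> float:
        """Current alarm threshold; requires at least two learned readings."""
        std = math.sqrt(self._m2 / (self.n - 1))
        return self.mean + max(self.k * std, self.min_margin)

    def update(self, x: float) -> bool:
        """Process one reading and return True if it should raise an alarm."""
        if self.n < max(self.warmup, 2):
            self._learn(x)
            return False
        if x > self.alarm_level():
            return True               # do not learn from abnormal readings
        self._learn(x)
        return False


if __name__ == "__main__":
    env = TemperatureEnvelope(warmup=10)
    for i in range(10):               # hypothetical normal readings, degrees C
        env.update(60.0 + (i % 2))
    for reading in (61.0, 70.0):
        if env.update(reading):
            print(f"ALARM: {reading} exceeds learned level {env.alarm_level():.2f}")
```

In this sketch only the `True` result would need to go over the radio. Switching to a continuous stream after the first alarm, as described above, would be a small addition in the calling code.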

Now consider a series of temperature sensors mounted on different assets. Each of those pieces of equipment might have different standard operating temperatures. And even identical assets located in different places in the building may have variations based on drafts, sunlight, or other factors. So, being able to use AI analytics separately at each sensor and to set different alarm levels will be extremely efficient. The value added to the manufacturing process will be multiplied if you use the same approach for other data such as vibration, pressure, levels, and flows. It will be multiplied even further if, in addition to all the sensor data, you track products and processes using cameras and then analyze that information using the video analytics that can be performed with the Akida platform.
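The point about separate alarm levels per sensor can be sketched in a few lines. The example below derives one threshold per sensor from that sensor's own history of normal readings, using a simple mean-plus-k-standard-deviations rule as a stand-in for on-device learning. The function name `learn_alarm_levels`, the sensor IDs, and the readings are all hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List


def learn_alarm_levels(history: Dict[str, List[float]], k: float = 3.0) -> Dict[str, float]:
    """Return an alarm level for each sensor based only on its own readings.

    Each sensor needs at least two readings so a standard deviation exists.
    """
    return {
        sensor_id: mean(values) + k * stdev(values)
        for sensor_id, values in history.items()
    }


if __name__ == "__main__":
    # Two identical motors; motor_b sits in direct sunlight and runs warmer.
    history = {
        "motor_a": [60.0, 61.0, 60.0, 61.0],
        "motor_b": [70.0, 71.0, 70.0, 71.0],
    }
    levels = learn_alarm_levels(history)
    reading = 65.0
    for sensor_id, level in levels.items():
        status = "ALARM" if reading > level else "ok"
        print(f"{sensor_id}: level {level:.2f}, reading {reading} -> {status}")
```

With a single shared threshold, a reading of 65 would either go unnoticed on the cooler motor or raise false alarms on the warmer one. Per-sensor levels avoid both problems.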

The Bottom Line

The industrial internet of things (sharing, collecting, and analyzing information across a complete manufacturing enterprise) can significantly enhance the bottom line, not only in monetary terms but also in the quality and reliability of the products and the ability to deliver them on time.

This article was written by Ed Brown, editor of Sensor Technology. For more information, go to www.brainchip.com and www.innophaseiot.com .