Fuel economy is a key factor in worldwide energy consumption. One way to improve it is to use driver feedback to promote efficient driving habits (eco-driving). Emerging research is examining Advanced Driver-Assistance Systems (ADAS) as a tool for promoting eco-driving.

For fixed speeds, fuel economy can also be enhanced by improving powertrain efficiency through optimized use of engine and battery power, an approach termed Optimal Energy Management Strategy (Optimal EMS). An Optimal EMS functions by controlling engine power. According to recent research, the largest improvements are possible when eco-driving and an Optimal EMS are combined.

The advanced driver-assistance systems (ADAS) now used for active safety include sensors such as light detection and ranging (LIDAR), radio detection and ranging (RADAR), ultrasonic sensors, and various types of imaging systems.

Using computer vision to identify the surrounding environment and classify objects in video is a significant area of ADAS research. A camera is one of the easier ways to determine the type of object that the vehicle is approaching. The general flow for this object detection includes image acquisition, pre-processing, segmentation, object detection and tracking, depth estimation, and system control. To accomplish object detection more reliably, recent approaches are exploring deep learning algorithms such as convolutional neural networks (CNNs) for greater accuracy.

Data Acquisition

Two routes were chosen to test different conditions: a highway drive cycle, and a city drive cycle. Four test runs were driven for each cycle.

Sensors were used in a test vehicle to determine vehicle speed and acceleration, along with a video feed of the driving environment.

A ZED stereo vision camera was used to obtain video data for post-processing. The camera was placed at the top of the windshield near the rearview mirror, minimizing the effect of glare and maximizing lane line, sign, and vehicle visibility. The downward camera angle reduced the effects of lighting conditions because the lens wasn't overexposed from the sun and still had a full view of the vehicle environment.

ADAS Information for Optimal EMS

Traditional ADAS tracks and utilizes data that would allow a vehicle to drive more safely. Such data includes details of vehicle location, distance of objects to the driver, lane detection, etc. However, for Optimal EMS prediction, only the data that would directly affect vehicle speed was used. As an example, if it is known that the vehicle/driver is going to slow down for a while, then the Optimal EMS may elect to turn off the engine to reduce fuel consumption.

The data can also help identify situations where a vehicle may need to slow down. Traffic light state and stop sign state were chosen because of their roles in regulating speed. For traffic lights, red, yellow, green, and N/A (not available) are states that were deemed to be relevant prediction data. Stop signs define an upcoming event that would slow the vehicle. However, detecting a stop sign nearby when turning onto a street is different from finding a stop sign down the street, thus requiring a different calculation with each stop sign.

In most cases, the only vehicle that affects the driver's speed is the one directly ahead. In situations where a car in an adjacent lane merges nearby, it will be tracked once it is in the main lane. Only scenarios in which the vehicle's spatial relationship to the vehicles ahead of it changes are considered. The output states for the vehicle-in-front state are defined as increasing, decreasing, and same with regard to distance, and N/A when there are no vehicles in the current lane.

An upcoming turn usually indicates a decrease in speed. Significant bends in the road or turning 90° warrant a reduction of speed, making the turning feature a necessary one for Optimal EMS prediction.
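The four prediction features described above could be collected into one record per time step. The following is a minimal sketch; the class names, the boolean encoding of the stop sign and turn features, and the `may_slow_down` helper are illustrative assumptions, not the authors' data format.

```python
from dataclasses import dataclass
from enum import Enum


class TrafficLight(Enum):
    RED = "red"
    YELLOW = "yellow"
    GREEN = "green"
    NA = "n/a"            # no traffic light available


class VehicleInFront(Enum):
    INCREASING = "increasing"   # distance to the lead vehicle is growing
    DECREASING = "decreasing"   # distance to the lead vehicle is shrinking
    SAME = "same"
    NA = "n/a"                  # no vehicle in the current lane


@dataclass(frozen=True)
class AdasSample:
    """One time step of speed-relevant ADAS features (hypothetical layout)."""
    time_s: int
    traffic_light: TrafficLight = TrafficLight.NA
    stop_sign_ahead: bool = False      # assumption: reduced to a flag
    vehicle_in_front: VehicleInFront = VehicleInFront.NA
    turn_ahead: bool = False           # assumption: reduced to a flag


def may_slow_down(sample: AdasSample) -> bool:
    """Illustrative rule: does any feature hint at an upcoming slowdown?"""
    return (
        sample.traffic_light in (TrafficLight.RED, TrafficLight.YELLOW)
        or sample.stop_sign_ahead
        or sample.vehicle_in_front is VehicleInFront.DECREASING
        or sample.turn_ahead
    )
```

In the article these features feed a velocity prediction rather than a fixed rule; the helper only shows how the states relate to speed.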

Ground Truth Development

As a reference, ADAS data that is as close to perfect as possible is required. This is called ground truth data and is obtained by having a human, rather than a computer algorithm, closely analyze the environment. Each prediction feature requires human-annotated data for all eight videos at a data rate of 1 Hz. This data is collected to show how an Optimal EMS prediction would fare with completely accurate ADAS data.

ADAS Detection Development

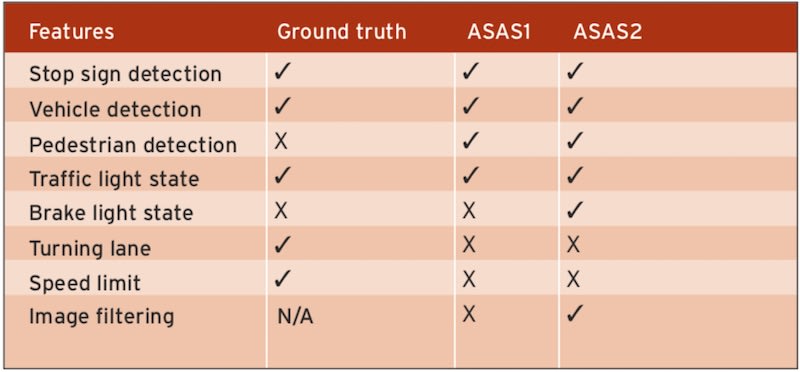

Two combinations of custom and known algorithms, called ADAS1 and ADAS2, were developed to automatically capture the ADAS EMS prediction features. Only ADAS information that could be obtained from a stereo vision camera was used. The features of ADAS1 and ADAS2 and the ground truth selection are shown in the table below.

The eight drive-cycle videos were analyzed by the computer vision algorithms to generate the EMS prediction data. Every 30th frame (for a 30-fps video capture rate) was read for output, a simplification that reduced computational overhead. Various pre-processing steps were applied to the frame image before sending it to the object detection and tracking algorithms.

Read Frame: Every frame was obtained from the ZED camera, which has a 110° wide-angle lens at an aspect ratio of 16:9 and a frame resolution of 1280 × 720, captured at 30 fps. It recorded the left and right frames as a single image; therefore, a separation step was required to split them into separate image arrays. The left frame array was used for object detection and tracking, and the right frame was ignored, to reduce computational overhead.
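The frame sampling and left/right separation can be sketched as follows. This is a minimal illustration using NumPy arrays; it assumes the two views are stored side by side in one image (left view in the left half), and the function names are hypothetical.

```python
import numpy as np


def split_stereo(frame: np.ndarray):
    """Split a side-by-side stereo image into (left, right) views.

    Assumption: the two views sit side by side and have equal width.
    """
    half = frame.shape[1] // 2
    return frame[:, :half], frame[:, half:]


def sampled_left_frames(frames, step=30):
    """Yield (frame_index, left_view) for every `step`-th frame.

    With 30-fps video and step=30 this gives one frame per second.
    """
    for index, frame in enumerate(frames):
        if index % step == 0:
            left, _right = split_stereo(frame)  # right view is ignored
            yield index, left
```

For a 2560 × 720 combined image, `split_stereo` returns two 1280 × 720 arrays, and only the left one is passed on to detection.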

Pre-processing: The second step was to crop the image to reduce the number of computations required. Only the driving and adjacent lanes were included, along with enough height to see the traffic lights. Another step was converting to different color spaces, for example, grayscale. In ADAS2, a Gaussian blur filter was applied to several frames to make the image more uniform before pixel brightness detection.
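The pre-processing steps (crop, grayscale conversion, Gaussian blur) can be sketched with plain NumPy. The crop bounds, the RGB channel order, the BT.601 luma weights, and the kernel size are assumptions chosen for illustration; the article does not state the values or library used.

```python
import numpy as np


def crop(image, top, bottom, left, right):
    """Keep only the region of interest (lanes plus traffic-light height)."""
    return image[top:bottom, left:right]


def to_grayscale(rgb):
    """Convert an RGB image to grayscale using BT.601 luma weights."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb[..., :3].astype(np.float64) @ weights


def gaussian_kernel(sigma, radius):
    """1-D Gaussian kernel normalized to sum to 1."""
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return kernel / kernel.sum()


def gaussian_blur(gray, sigma=1.0, radius=2):
    """Separable Gaussian blur with edge padding; output keeps the input shape."""
    kernel = gaussian_kernel(sigma, radius)
    padded = np.pad(gray, radius, mode="edge")
    # Blur along rows first, then along columns.
    rows = np.apply_along_axis(
        lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(
        lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)
```

Cropping first keeps the later steps cheap, since the grayscale conversion and blur then touch only the pixels that can contain lanes, vehicles, or traffic lights.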

Object Detection: In order to detect objects such as stop signs that would predict a possible speed change, an image frame is sent to the Convolutional Neural Network (CNN) layer, which returns a list of objects detected with a given confidence score.

The CNN output layer indicated vehicle detection with bounding boxes around those vehicles. Vehicles detected in the image were considered only if found in the driver's lane. The position of each detected vehicle was compared with the width of the lane; if it fell within that range, the object's bounding box was tracked. If the bounding box was larger in the next frame, the vehicle was assumed to be approaching. If the bounding box was the same size or smaller, the vehicle was considered to have remained at the same distance. The bounding box area method worked adequately because an exact distance is not needed to detect relative vehicle movement across frames.
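The bounding-box area comparison can be sketched as below. This is an illustration under stated assumptions: the lane test uses the horizontal center of the box, a 5% tolerance separates real size changes from noise, and a shrinking box is reported as "increasing" distance to match the output states listed earlier, whereas the description above folds that case into "same". All names are hypothetical.

```python
from typing import NamedTuple, Optional


class Box(NamedTuple):
    x: float  # left edge in pixels
    y: float  # top edge in pixels
    w: float  # width in pixels
    h: float  # height in pixels

    @property
    def area(self) -> float:
        return self.w * self.h

    @property
    def center_x(self) -> float:
        return self.x + self.w / 2.0


def in_lane(box: Box, lane_left: float, lane_right: float) -> bool:
    """Assumption: a vehicle is in the driver's lane if its center is."""
    return lane_left <= box.center_x <= lane_right


def distance_state(prev: Optional[Box], curr: Optional[Box],
                   tolerance: float = 0.05) -> str:
    """Classify relative distance from the change in bounding-box area."""
    if curr is None:
        return "n/a"                  # no vehicle in the current lane
    if prev is None or prev.area == 0:
        return "same"                 # nothing to compare against yet
    change = (curr.area - prev.area) / prev.area
    if change > tolerance:
        return "decreasing"           # box grew: vehicle is closer
    if change < -tolerance:
        return "increasing"           # box shrank: vehicle is farther
    return "same"
```

Because only the sign of the area change matters, no camera calibration or depth estimate is needed for this feature.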

Traffic light state detection was done similarly to vehicle detection, with an extra processing step. The list of traffic light bounding boxes returned by the CNN was iterated and filtered by confidence score to select the single traffic light with the highest confidence for object state classification. This filtering eliminated the need to find the state of each traffic light. Stop sign and pedestrian detections were added if their confidence score was higher than 30.
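The confidence filtering can be sketched as follows. The article gives the threshold as 30 without units, so the sketch assumes scores on a 0 to 100 scale; the `Detection` tuple and the label strings are likewise assumptions about the CNN output, not the authors' format.

```python
from typing import List, NamedTuple, Optional, Tuple


class Detection(NamedTuple):
    label: str                        # hypothetical class name from the CNN
    confidence: float                 # assumed to be on a 0-100 scale
    box: Tuple[int, int, int, int]    # x, y, width, height in pixels


def best_traffic_light(detections: List[Detection]) -> Optional[Detection]:
    """Return the traffic light with the highest confidence, if any."""
    lights = [d for d in detections if d.label == "traffic light"]
    return max(lights, key=lambda d: d.confidence, default=None)


def accepted_signs_and_pedestrians(detections: List[Detection],
                                   threshold: float = 30.0) -> List[Detection]:
    """Keep stop signs and pedestrians whose confidence exceeds the threshold."""
    wanted = ("stop sign", "person")
    return [d for d in detections
            if d.label in wanted and d.confidence > threshold]
```

Selecting a single traffic light means the color classification step runs at most once per frame.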

Object State Classification: Traffic lights and vehicle brake lights required object state classification to determine what state the traffic light indicated and if the brake lights were on. To accomplish this, the bounding box information of the objects was taken, and a sub-image was created based on the size of the bounding boxes. Algorithms then determined the color states of these sub-images. (Figures 1 – 3)
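The article does not specify the color-state algorithm, so the following is only one simple possibility: cut the sub-image out of the frame and assign the reference color nearest to its mean RGB value. The reference colors and brightness cutoff are assumptions, and the Results section reports that relying on average pixel color was an ineffective predictor, so this should be read as a baseline rather than a recommended method.

```python
import numpy as np

# Hypothetical reference colors in RGB order.
REFERENCE_COLORS = {
    "red": (255, 0, 0),
    "yellow": (255, 255, 0),
    "green": (0, 255, 0),
}


def sub_image(frame, box):
    """Extract the pixels inside a bounding box given as (x, y, w, h)."""
    x, y, w, h = box
    return frame[y:y + h, x:x + w]


def classify_light(frame, box, min_brightness=60.0):
    """Return 'red', 'yellow', 'green', or 'n/a' from the mean patch color."""
    patch = sub_image(frame, box)
    if patch.size == 0:
        return "n/a"
    mean_rgb = patch.reshape(-1, 3).astype(np.float64).mean(axis=0)
    if mean_rgb.max() < min_brightness:
        return "n/a"                  # too dark to call a state
    distances = {
        name: float(np.linalg.norm(mean_rgb - np.array(ref, dtype=np.float64)))
        for name, ref in REFERENCE_COLORS.items()
    }
    return min(distances, key=distances.get)
```

The same sub-image approach could in principle be applied to brake lights by testing for a bright red region inside the vehicle's bounding box.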

Baseline Energy Management Strategy Simulation

A 2010 Toyota Prius was selected as the vehicle model due to its commercial prevalence and because it has the best fuel economy in its class. The Autonomie modeling software used for the simulation demonstrated strong correlation with real-world testing.

When the real-world measured values were compared with the simulation values, the simulated fuel economy was within 3% of every physically measured fuel economy number, and the baseline EMS was considered validated.

Optimal Energy Management System Derivation

Vehicle Operation Prediction: Model ADAS data, along with current GPS location and current vehicle velocity, was used to predict future vehicle operation within the perception subsystem. An artificial neural network combined the outputs from sensors and signals to generate a vehicle velocity prediction.

Planning Subsystem Model: This vehicle operation prediction was then used in an optimal control algorithm that uses dynamic programming to derive the globally Optimal EMS for the given prediction. This optimal control is then issued as a control request to the vehicle's “running controller,” which evaluates component limitations before actuating the vehicle plant. The process of perception, planning, and vehicle actuation uses second-by-second feedback, with the Optimal EMS computed for 15-second predictions.
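The planning step can be illustrated with a toy dynamic program. This is a minimal sketch, not the authors' formulation: the fuel-rate curve, the engine power options, the integer-kJ battery state, the 1-second time step, and the charge-sustaining end constraint are all assumptions made so that the recursion stays short and exact.

```python
import math
from typing import List, Sequence, Tuple


def fuel_rate(p_engine_kw: int) -> float:
    """Hypothetical fuel rate in g/s: fixed cost when on plus a linear term."""
    return 0.0 if p_engine_kw == 0 else 0.3 + 0.06 * p_engine_kw


def optimal_ems(demand_kw: Sequence[int], e_start_kj: int, e_max_kj: int,
                engine_options: Sequence[int] = (0, 10, 20, 30)
                ) -> Tuple[List[int], float]:
    """Backward dynamic programming over battery energy (integer kJ).

    demand_kw is the predicted power demand per 1-second step (integers).
    Returns the engine power plan and the minimum total fuel in grams.
    """
    n = len(demand_kw)
    # cost[t][e]: minimum fuel from step t to the end, starting with energy e.
    cost = [[math.inf] * (e_max_kj + 1) for _ in range(n + 1)]
    policy = [[None] * (e_max_kj + 1) for _ in range(n)]
    for e in range(e_start_kj, e_max_kj + 1):
        cost[n][e] = 0.0   # assumption: battery must end at or above its start
    for t in range(n - 1, -1, -1):
        for e in range(e_max_kj + 1):
            for p_engine in engine_options:
                # Battery covers whatever the engine does not (1 kW * 1 s = 1 kJ).
                e_next = e - (demand_kw[t] - p_engine)
                if 0 <= e_next <= e_max_kj:
                    candidate = fuel_rate(p_engine) + cost[t + 1][e_next]
                    if candidate < cost[t][e]:
                        cost[t][e] = candidate
                        policy[t][e] = p_engine
    if math.isinf(cost[0][e_start_kj]):
        raise ValueError("no feasible plan for this demand and battery state")
    plan, e = [], e_start_kj
    for t in range(n):                 # forward pass: read out the plan
        p_engine = policy[t][e]
        plan.append(p_engine)
        e -= demand_kw[t] - p_engine
    return plan, cost[0][e_start_kj]
```

In a receding-horizon use matching the second-by-second feedback described above, `demand_kw` would hold the 15 predicted steps, only the first entry of the returned plan would be requested from the running controller, and the problem would be solved again one second later with an updated prediction.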

Vehicle Subsystem Model: The inputs to the vehicle model are the Optimal EMS control requests and disturbances attributed to misprediction. Of particular interest are the fuel consumption, achieved engine power, and battery state of charge. These results can then be compared to the outputs from the baseline EMS simulation.

Results

ADAS Output Comparison:

For the vehicle-in-front state, the average accuracy was around 60% for ADAS1 and 70% for ADAS2. The ADAS2 accuracy was better due to the different weight and configuration files used for detection. However, the CNN in both ADAS1 and ADAS2 failed to identify objects at distances farther than 20 m. The ground truth data labeled by hand identified vehicles at a much greater range.

For traffic lights, the average accuracy for the highway drive cycle videos was around 70%, while the average accuracy for the city drive cycle videos was 40%, as shown in Figure 4. The inaccuracies were due to the changing ambient lighting and the diverse positioning of the traffic lights. In many frames the traffic light was blocked by cars, and the ground truth identified traffic lights at a much greater distance. Most traffic lights, being smaller than vehicles, only provided positive readings when the vehicle was within 5 meters. Detecting the traffic light color correctly was also challenging. Relying on the average color of the pixels was found to be an ineffective predictor for these lights.
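The article does not define how accuracy was computed. One natural reading, assumed here, is the fraction of 1 Hz samples for which the algorithm's output state matches the hand-labeled ground truth state; the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence, Tuple


def accuracy(predicted: Sequence[str], truth: Sequence[str]) -> float:
    """Fraction of samples where the predicted state equals the ground truth."""
    if len(predicted) != len(truth):
        raise ValueError("sequences must have the same length")
    if not truth:
        raise ValueError("sequences must not be empty")
    matches = sum(p == t for p, t in zip(predicted, truth))
    return matches / len(truth)


def confusion_counts(predicted: Sequence[str],
                     truth: Sequence[str]) -> Dict[Tuple[str, str], int]:
    """Count (truth, predicted) pairs to show which states get confused."""
    return dict(Counter(zip(truth, predicted)))
```

A breakdown by pair is useful for a case like missed distant traffic lights, which would show up as many ("red", "n/a") or ("green", "n/a") entries.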

Fuel Economy Improvements with ADAS:

Fuel economy improvements from an Optimal EMS based on ADAS detection must be assessed with respect to the globally Optimal EMS fuel economy improvement. The globally Optimal EMS fuel economy improvement is possible when the entire drive cycle is predicted 100% accurately from time zero. This serves as a reference point for understanding the scope of the improvement that can be realized through ADAS detection. A new metric called “prediction window optimal” was also defined: the optimal prediction over the 15-second window used for ADAS1 and ADAS2.

Figure 5 shows the comparison for the city-focused drive cycle between the globally Optimal EMS strategy, the two ADAS strategies (ADAS1, ADAS2), and the ground truth ADAS strategy. All results are presented relative to the baseline control strategy for the 2010 Toyota Prius. The globally Optimal EMS fuel economy improvement is 19.6%, which represents an upper bound. The prediction window optimal fuel economy improvement is 11.9%. With ground truth ADAS detection, approximately half of this amount (6.1%) is realized, which is a very promising result.

Using the actual algorithms, a 4.4% improvement was realized with ADAS1, and 5.3% with ADAS2. The difference between the ground truth ADAS results and those from the actual ADAS deployments was due to the inaccuracies and limitations of the real-time computer vision algorithms. The failure to detect objects at great distances reduced the accuracy in many cases. Another source of error was the traffic light state detection. There were also several difficulties in producing accurate results in time to make an impact on fuel economy. ADAS2 gave a slightly higher percentage improvement than ADAS1, primarily due to the different set of weighting and training parameters and the addition of vehicle brake light data.
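The reported city-cycle improvements can be put on a common scale by expressing each as a share of a reference value. The short calculation below uses only the percentages quoted above; the dictionary keys and function name are illustrative.

```python
# Fuel economy improvements over the baseline EMS, in percent (city cycle).
IMPROVEMENT_PCT = {
    "globally optimal": 19.6,
    "prediction window optimal": 11.9,
    "ground truth ADAS": 6.1,
    "ADAS1": 4.4,
    "ADAS2": 5.3,
}


def share_of(reference: str, improvements=IMPROVEMENT_PCT):
    """Express every improvement as a percentage of the reference improvement."""
    ref = improvements[reference]
    return {name: 100.0 * value / ref for name, value in improvements.items()}


if __name__ == "__main__":
    for name, share in share_of("prediction window optimal").items():
        print(f"{name}: {share:.1f}% of prediction window optimal")
```

Measured this way, ground truth ADAS captures about 51% of the prediction window optimal improvement, ADAS2 about 45%, and ADAS1 about 37%, which makes the gap attributable to detection errors easier to see.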

Conclusions

Overall, these promising results suggest that modern commercially available ADAS technology could be repurposed to implement an Optimal EMS. As new sensing capabilities become commercially available, the fuel economy improvements possible through an Optimal EMS may start to approach the globally Optimal EMS results.

This article was adapted from SAE Technical Paper 2018-010593, written by Jordan A. Tunnell, Zachary D. Asher, Sudeep Pasricha, and Thomas H. Bradley, Colorado State University. To obtain the full technical paper and access more than 200,000 resources for the aerospace, automotive, and commercial vehicle industries, visit the SAE MOBILUS website.