For centuries, predicting the future has been one of the obsessions of humankind, and understandably so. Knowing what will happen gives us a sense of control and security that heavily influences the choices we make, from what we’ll wear to where we’ll invest and what we’ll do. Over decades, we’ve developed technology that can get us close to predicting specific futures: the weather, economic downturns, when machines will fail, and how many miles an electric vehicle will give us are just some examples of such predictions. At the simplest level, what makes predictions work is having the right data fed into the right analysis model to determine the likelihood of something happening, thus enabling engineers to act on it proactively. Sounds simple enough, but in practice, it’s anything but. From expensive recalls to battery malfunctions, EV batteries are under scrutiny and engineers are on the hook for it.

We are constantly blindsided by unfortunate battery-related events because, as any engineer knows, the devil is in the details – the implementation details. So how do we overcome this? By harnessing something else that lives in the details: data. With data working for them, automakers can optimize battery safety and performance, and ultimately, drive EV adoption.

Before Harnessing, Can You Get the Data?

There’s a great analogy we heard from an EV engineering group manager at a large American OEM: Batteries are like humans, they have emotions… kind of. Just like humans, batteries perform at their best under the right set of conditions. For example, batteries “prefer” certain temperatures and charge/discharge rates to deliver energy, age gracefully, and operate safely. To determine those conditions, engineers measure multiple variables during test and use the results to learn how the battery behaves and how to control its environment once it’s deployed, with the goal of safely squeezing every mile from the battery pack.

To understand the scale at which these measurements need to happen and how much data we need to gather, let’s use an example. As we know, battery cells are the smallest energy-storing component that goes into a battery module, which then goes into a battery pack. Any battery pack will contain from dozens to hundreds of these cells depending on the type of vehicle (hybrid, plug-in hybrid, fully electric).

Let’s assume a mid-size Li-ion battery pack with 12 modules. To safely manage it and keep it in the right operational state, each module requires about eight sensors measuring voltage, for a total of 96 measurements per pack. Depending on the type of test, these measurements may need to be taken as often as every tenth of a second, for as long as a year, so we’re looking at over 30 billion voltage measurements in a year. Now add to this the millions of other measurements and the data from the supply chain, test labs and operators, manufacturing, and so on, and we quickly get an understanding of 1) how much data we need to gather and 2) how varied the data sources are.
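The arithmetic behind that figure can be checked in a few lines. The sketch below uses only the numbers from the example; the 8 bytes per sample used for the storage estimate is our own illustrative assumption, not a figure from any particular test system.

```python
# Worked version of the measurement count from the example pack.
MODULES_PER_PACK = 12
VOLTAGE_SENSORS_PER_MODULE = 8
SAMPLES_PER_SECOND = 10                  # one sample every tenth of a second
SECONDS_PER_YEAR = 365 * 24 * 60 * 60    # 31,536,000

channels = MODULES_PER_PACK * VOLTAGE_SENSORS_PER_MODULE
measurements_per_year = channels * SAMPLES_PER_SECOND * SECONDS_PER_YEAR

# Assumption for illustration only: 8 bytes per raw value,
# ignoring timestamps, metadata, and compression.
BYTES_PER_SAMPLE = 8
raw_gigabytes = measurements_per_year * BYTES_PER_SAMPLE / 1e9

print(f"{channels} voltage channels per pack")
print(f"{measurements_per_year:,} voltage measurements per year")
print(f"roughly {raw_gigabytes:.0f} GB of raw values per pack per year")
```

Even under that modest storage assumption, a single pack tested for a year produces hundreds of gigabytes of voltage data alone, before any other signal is counted.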

The end-to-end process to extract the insights from all this data, from these disparate sources, starts with automating the data ingestion, aggregation, contextualization, calculation of metadata, indexing, and tagging. The first thing to consider is how siloed the data may be, so an organization can decide how all data sources will be ingested into a centralized location.
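To make those steps more concrete, here is a minimal sketch of what ingestion, metadata calculation, tagging, and indexing might look like for a single file. Everything in it is hypothetical: the `IngestedFile` schema, the one-voltage-per-line file layout, the tag format, and the file names are our own illustrations, not the design of any specific data platform.

```python
import hashlib
import statistics
from dataclasses import dataclass, field


@dataclass
class IngestedFile:
    """Catalog entry for one ingested test file (hypothetical schema)."""
    name: str
    source: str
    checksum: str
    sample_count: int
    v_min: float
    v_max: float
    v_mean: float
    tags: set = field(default_factory=set)


def ingest(name, source, raw_bytes, context):
    """Parse a one-voltage-per-line file, then compute metadata and tags."""
    volts = [float(token) for token in raw_bytes.decode().split()]
    return IngestedFile(
        name=name,
        source=source,
        checksum=hashlib.sha256(raw_bytes).hexdigest(),  # detects duplicate uploads
        sample_count=len(volts),
        v_min=min(volts),
        v_max=max(volts),
        v_mean=statistics.fmean(volts),
        tags={f"{key}:{value}" for key, value in context.items()},
    )


def build_index(entries):
    """Map each tag to the names of the files that carry it."""
    index = {}
    for entry in entries:
        for tag in entry.tags:
            index.setdefault(tag, []).append(entry.name)
    return index


catalog = [
    ingest("run_001.txt", "test_station_A", b"3.70\n3.71\n3.72\n",
           {"pack": "P12", "test": "cycle"}),
    ingest("run_002.txt", "usb_stick", b"3.65\n3.66\n",
           {"pack": "P12", "test": "soak"}),
]
index = build_index(catalog)
print(index["pack:P12"])   # every file recorded for pack P12, whatever its source
```

The point of the sketch is that context (which pack, which test, which source) is attached at ingestion time, so later searches do not depend on someone remembering where a file came from.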

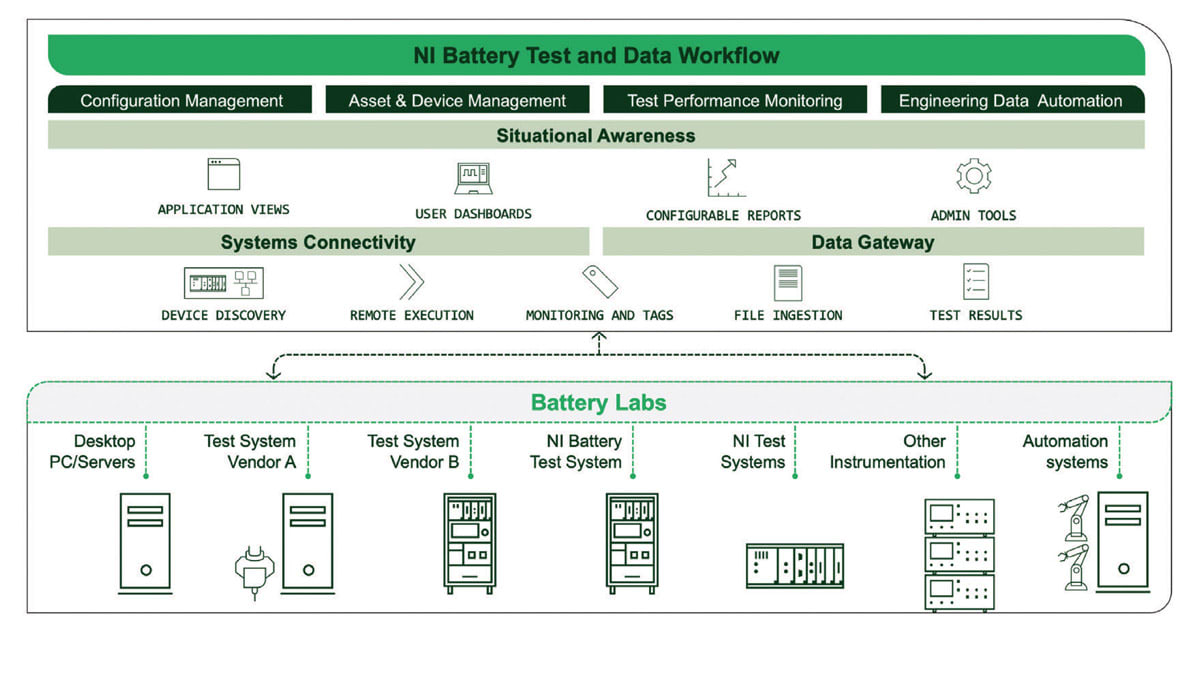

From experience, we’ve seen data stored in a multitude of places, such as on deployed test stations, on external memory devices (for example, a USB stick used for data collection at a remote, unconnected testing facility), locally on an engineer’s computer, in on-premises databases, or in the cloud. Clearly, having data scattered across multiple locations means the analytics can only identify lagging indicators, which leads to reactive responses and limits visibility into how the test assets actually performed (Figure 1).

A practical way to extract siloed data is to use Extract-Transform-Load (ETL) pipelines, a method to collect, combine, clean, and translate the data into a common format that’s reliable and readable. These are different from data pipelines, which are only used to transport the data. Once the data has gone through the ETL pipeline, you’re no longer referencing or viewing the source file at all, so it can be archived, and because of how the ETL pipeline transforms the data, it can be queried as if it were in a database. This improved “query-ability” allows us to deepen searches and go beyond properties and metadata to query, for example, specific columns under specific parameters or above user-defined thresholds.
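As a rough illustration of the idea, the sketch below runs two differently formatted exports through extract, transform, and load steps, then queries the result by threshold. It uses only Python’s standard `csv` and `sqlite3` modules; the file layouts, column names, table name, and the 4.2 V limit are all assumptions made up for this example.

```python
import csv
import io
import sqlite3

# Hypothetical raw exports from two silos, with different delimiters,
# column names, and units.
STATION_CSV = "cell_id,timestamp_s,voltage_mV\nC001,0.0,3712\nC001,0.1,3715\nC002,0.0,3698\n"
LAPTOP_CSV = "Cell;Time;Volts\nC003;0.0;3.705\nC003;0.1;4.251\n"


def extract(text, delimiter):
    """Extract: read the raw rows of one source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text), delimiter=delimiter))


def transform_station(row):
    """Transform: rename columns to the common schema, millivolts to volts."""
    return (row["cell_id"], float(row["timestamp_s"]),
            float(row["voltage_mV"]) / 1000.0, "test_station")


def transform_laptop(row):
    """Transform: rename columns to the common schema (already in volts)."""
    return (row["Cell"], float(row["Time"]), float(row["Volts"]), "engineer_laptop")


def load(conn, records):
    """Load: write common-format records into the central table."""
    conn.executemany("INSERT INTO voltage VALUES (?, ?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE voltage (cell_id TEXT, t_s REAL, volts REAL, source TEXT)")
load(conn, [transform_station(r) for r in extract(STATION_CSV, ",")])
load(conn, [transform_laptop(r) for r in extract(LAPTOP_CSV, ";")])

# Query a specific column against a user-defined threshold.
THRESHOLD_V = 4.2  # hypothetical limit
over_limit = conn.execute(
    "SELECT cell_id, t_s, volts, source FROM voltage "
    "WHERE volts > ? ORDER BY cell_id, t_s",
    (THRESHOLD_V,),
).fetchall()
print(over_limit)
```

After loading, the query no longer cares which silo or file format a reading came from; the `source` column keeps that context for traceability.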

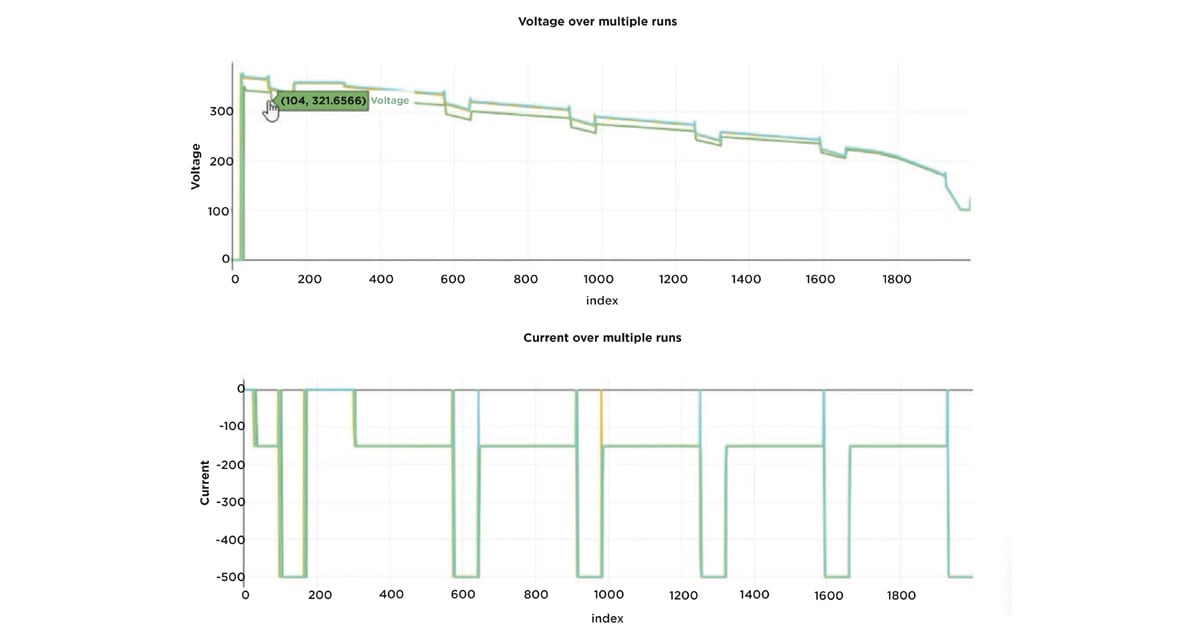

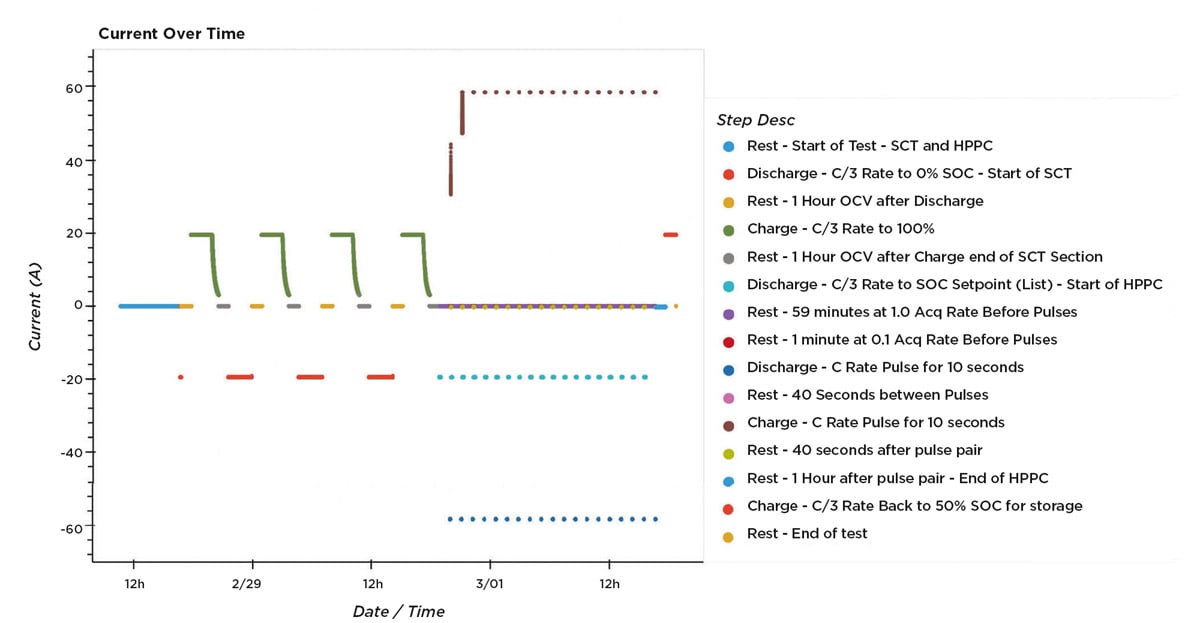

By breaking silos and using ETL pipelines, engineers are free to use tools like Jupyter Notebooks or Grafana to create and share documents, dashboards, analysis, and ultimately, to collaborate so they can make the decisions they need using the most relevant data, in a timely manner, regardless of format and source (Figures 2a and 2b).

Moving Toward Predictive Analytics

In the test engineering context, making the data available and usable to the right people is already a massive advantage for improving product performance. To be clear, “the right people” does not always mean the test engineers themselves. It’s common for companies to use subject matter experts (SMEs) in physics, chemistry, data science, modeling, or manufacturing to make data-driven decisions. In many cases, these SMEs will have their preferred tools for analysis and programming, or no coding experience at all. Being able to query the data using natural language empowers them to do what they do best and add value to the whole process according to their area of expertise, without being forced into tools or skills outside their core know-how.

As these practices of sharing and leveraging data increase with scale, SME expertise can become scarce, thus pushing companies to also leverage automated analytics solutions. The advantage is that rather than having an SME directly looking for insights, you can have them training algorithms, working with machine learning (ML) experts, or creating statistical models that are then fed into software to automate the analytics.

Beyond this, there are analytics tools that turn automation and insights into action in a variety of ways. The main difference with such tools is that they collect not only the metadata or pass/fail results, but also all the parametric data associated with individual components.

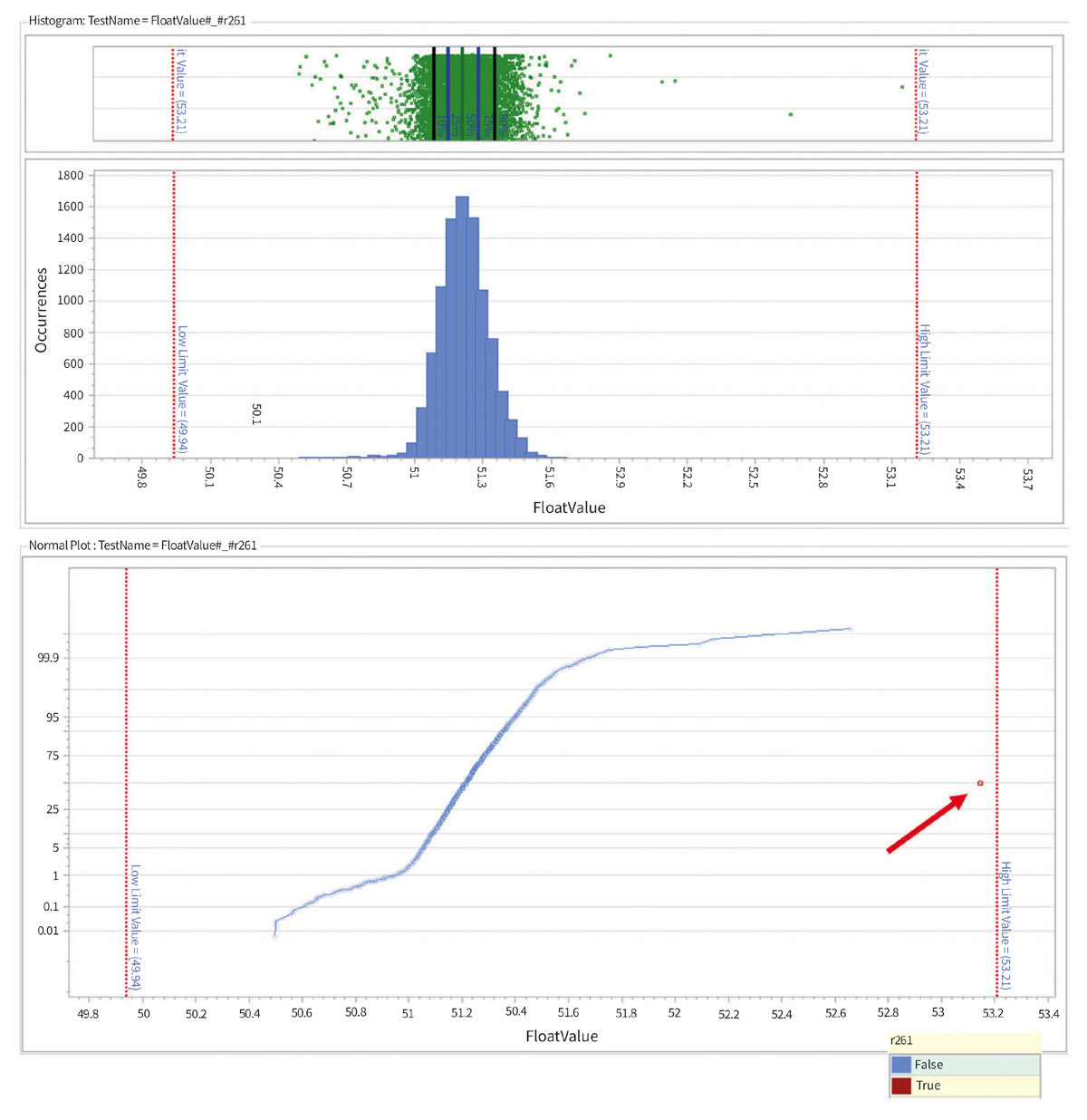

To understand what this means, let’s continue the example of making voltage measurements in battery cells. It wouldn’t be surprising if the measurement results followed a Gaussian curve within the defined voltage limits, resembling something like what we see in Figure 3. Now, let’s assume that there’s one measurement that is still within the limits, therefore passing the test, but it’s oddly far from the distribution (circled in red). If we’re doing outlier detection, we would be able to detect the odd measurement, but that doesn’t necessarily mean we know exactly which cell returned that measurement, or where it is in the process.

Analytics tools help provide that traceability, so that not only is the outlier detected, but engineers also know exactly where it came from and where it is now (which module or pack it went into). They can then take a proactive approach to avoid issues, or find the root cause more quickly when issues do happen.
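The difference between detecting an outlier and tracing it can be sketched in a few lines. The example below is an illustration under our own assumptions, not how any particular analytics tool works: it scores each reading with a median-based modified z-score (a common robust choice, swapped in here for simplicity), and the cell, module, and pack identifiers, the voltage limits, and the 3.5 threshold are all hypothetical.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class CellMeasurement:
    cell_id: str
    module_id: str
    pack_id: str
    volts: float


LOWER_LIMIT_V, UPPER_LIMIT_V = 3.60, 3.80  # hypothetical pass/fail limits


def find_passing_outliers(measurements, threshold=3.5):
    """Return readings that pass the limits but sit far from the population."""
    values = [m.volts for m in measurements]
    median = statistics.median(values)
    # Median absolute deviation: a spread estimate the outlier cannot inflate.
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        return []  # no spread to compare against
    flagged = []
    for m in measurements:
        score = 0.6745 * (m.volts - median) / mad  # modified z-score
        passes = LOWER_LIMIT_V <= m.volts <= UPPER_LIMIT_V
        if passes and abs(score) > threshold:
            flagged.append(m)
    return flagged


readings = [3.700, 3.702, 3.698, 3.701, 3.699, 3.703, 3.697, 3.700, 3.770]
measurements = [
    # Three cells per module, all built into the same hypothetical pack.
    CellMeasurement(f"C{i + 1:03d}", f"M{i // 3 + 1:02d}", "P12", volts)
    for i, volts in enumerate(readings)
]

outliers = find_passing_outliers(measurements)
for m in outliers:
    print(f"{m.cell_id} read {m.volts:.3f} V: in module {m.module_id}, pack {m.pack_id}")
```

Because each reading carries its cell, module, and pack identifiers with it, flagging the odd value immediately tells the engineer which physical component to go and look at.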

The Vision – Stop Testing Backward

There are many challenges along the way to empowering engineers and SMEs to proactively get insights to predict and prevent issues, and every company decides on its own strategy through its digital transformation efforts. Regardless, we believe there are three key functions any data platform should perform:

- Secure the data without being overly restrictive, thus enabling collaboration without data breaches or unwanted exposures. Features like access control, encryption, and flexibility for storage (on-premises or cloud) are key areas to consider in this matter.

- Enable scalability by automating the end-to-end process from getting the data to getting the insights. It’s important to consider how critical it is to remain vendor-independent and what investments that will require to achieve the scalability needed.

- Provide the openness that ensures sustainability in the long term, by being compliant with existing IT infrastructure, and compatible with any number of visualization tools, databases, and programming languages, so the SMEs can focus on deriving the insights, rather than the implementation details.

Ultimately, the goal with using test data is to stop “testing backward.” At NI, we believe that it’s only by harnessing the power of data that we can elevate the role of test to become a holistic, forward-looking process that optimizes product performance.

This article was written by Stephanie Amrite, Principal Solutions Marketer; Product Analytics and Arturo Vargas, Chief Solution Marketer; EV Battery Test, both at NI (Austin, TX).