What's best for your application?

How does one select the best HD video camera and imaging sensor for professional video in applications such as life sciences, surgical imaging, microscopy, industrial imaging, and specialized point-of-view broadcasting where physical camera size is important and exceptional color video characteristics are critical?

These applications largely depend on dynamic, real-time viewing of live video by people who watch a display and make decisions based on what the camera shows them. Is a 3-chip camera necessary, or will a single-chip camera suffice? What about sensor size, format, pixel size, and pixel density: how do these factors affect your image? This article reviews and clarifies key points to consider in camera selection to achieve the best possible outcome for your video application.

CCD vs. CMOS

Which is better: CCD (charge-coupled device) or CMOS (complementary metal-oxide semiconductor)? It depends, as both sensor technologies have advantages. For most applications CMOS is the better choice, but in others CCD continues to hold its ground. Both use semiconductors to convert light into electrical signals.

In a CMOS sensor, each pixel has a photoreceptor that performs its own charge-to-voltage conversion, and the sensor typically includes amplifiers, noise-correction, and digitization circuits, enabling it to output digital data directly. In a rolling-shutter CMOS sensor, each row of pixels collects light for its exposure period and is then read out, progressively from top to bottom, line by line, so different rows capture slightly different moments. In a CCD sensor, light enters the photoreceptor and is stored as an electrical charge within the sensor; after the exposure ends, the charge is shifted to an output node, converted to voltage, buffered, and sent off the chip as an analog signal.

A key advantage of CMOS technology is that it provides digital output and can be controlled at the pixel level in ways that are not possible with CCDs. This offers potentially large advantages in specialized imaging where one might want to apply partial scanning, or a particular control process, to only a segment of the sensor. This capability is useful for operating cameras in different imaging modes, such as multispectral imaging or binning.
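To make partial scanning and binning concrete, here is a minimal software sketch that applies both operations to a small frame stored as a list of rows. It only illustrates what the output looks like; in a real CMOS camera these modes are configured through vendor-specific controls not shown here, and the function names `read_roi` and `bin2x2` are hypothetical. Summing digital values is one way to bin; actual sensors may bin differently.

```python
from typing import List

Frame = List[List[int]]


def read_roi(frame: Frame, top: int, left: int, height: int, width: int) -> Frame:
    """Return only the pixels inside a rectangular region of interest."""
    return [row[left:left + width] for row in frame[top:top + height]]


def bin2x2(frame: Frame) -> Frame:
    """Sum each non-overlapping 2x2 block into one output pixel.

    Trailing odd rows/columns are dropped for simplicity.
    """
    out = []
    for r in range(0, len(frame) - 1, 2):
        out_row = []
        for c in range(0, len(frame[r]) - 1, 2):
            out_row.append(frame[r][c] + frame[r][c + 1]
                           + frame[r + 1][c] + frame[r + 1][c + 1])
        out.append(out_row)
    return out


# Hypothetical 4 x 6 frame of raw pixel values (0..23).
frame = [[r * 6 + c for c in range(6)] for r in range(4)]
print(read_roi(frame, top=1, left=2, height=2, width=3))  # [[8, 9, 10], [14, 15, 16]]
print(bin2x2(frame))                                      # [[14, 22, 30], [62, 70, 78]]
```

Binning trades spatial resolution for signal: each output pixel carries the summed light of four photosites.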

The advantages of CCDs over CMOS are higher quantum efficiency (QE) and generally lower noise. The proportion of each pixel dedicated to light gathering, rather than masked for other functions (the fill factor), is also comparatively high. However, CCD cameras generally consume more power than CMOS cameras, which can be a consideration for certain life science applications or for battery-powered cameras. Blooming and smear are unwanted CCD artifacts; they typically appear as a vertical streak running through a bright light source or saturated area in the image.

Global vs. Rolling Shutter

Probably the most significant issue when deciding between CCD and CMOS is global vs. rolling shutter. Most CMOS sensors today use a rolling shutter, which is always active, rolling through the pixels line by line from top to bottom. CCDs, on the other hand, expose the entire sensor area at the same moment: the accumulated charges are read out when the shutter closes, and the pixels are reset for the next exposure. When the shutter opens again, the CCD receives light and accumulates charge once more.
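The timing difference can be sketched with a simplified model that ignores reset and readout details: under a rolling shutter each row begins integrating one "line time" after the row above it, while under a global shutter every row integrates over the same interval. The 1080-row sensor, 15 µs line time, and 1 ms exposure below are hypothetical values chosen for illustration.

```python
from typing import List, Tuple


def exposure_windows(rows: int, exposure_us: float, line_time_us: float,
                     rolling: bool) -> List[Tuple[float, float]]:
    """Return the (start, end) exposure interval of each row, in microseconds.

    Rolling shutter: row i starts integrating i * line_time_us after row 0.
    Global shutter: every row integrates over the same interval.
    """
    windows = []
    for i in range(rows):
        start = i * line_time_us if rolling else 0.0
        windows.append((start, start + exposure_us))
    return windows


# Hypothetical sensor: 1080 rows, 15 us line time, 1 ms exposure.
rolling = exposure_windows(1080, 1000.0, 15.0, rolling=True)
global_ = exposure_windows(1080, 1000.0, 15.0, rolling=False)
print(rolling[0], rolling[-1])   # (0.0, 1000.0) (16185.0, 17185.0)
print(global_[0], global_[-1])   # (0.0, 1000.0) (0.0, 1000.0)
```

In this model the bottom row of the rolling-shutter frame is exposed more than 16 ms after the top row, which is the root of the motion and pulsed-light artifacts discussed next.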

These shutter differences affect video imaging in several ways, especially with rotational movement, horizontal motion, laser pulses, or strobe lighting. CCDs handle these motions and pulsed-light conditions rather well, because the whole scene is exposed at one moment in time, like a snapshot. In addition, a CCD sensor (global shutter) can be triggered more easily, allowing the light pulse or motion to be synchronized with the open-shutter phase.
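Horizontal motion shows why the snapshot behavior matters. In the sketch below, which assumes a constant-speed object and the same hypothetical 15 µs line time, each rolling-shutter row samples the object slightly later than the row above, so a straight vertical edge is recorded as a slanted one. With a global shutter the offset would be zero in every row.

```python
from typing import List


def rolling_shutter_skew(rows: int, line_time_s: float,
                         speed_px_per_s: float) -> List[float]:
    """Horizontal offset (in pixels) of a moving vertical edge in each row,
    relative to row 0, for an object moving sideways at constant speed.

    Each row is sampled line_time_s later than the row above it, so the object
    has moved a little further by the time that row is captured.
    """
    return [speed_px_per_s * i * line_time_s for i in range(rows)]


# Hypothetical: 1080 rows, 15 us line time, object crossing at 2000 px/s.
skew = rolling_shutter_skew(1080, 15e-6, 2000.0)
print(f"{skew[-1]:.2f} px")   # 32.37 px: the straight edge appears slanted
```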

With a rolling-shutter CMOS sensor, these effects can be managed to an extent through a combination of fast shutter speeds and careful timing of the light source; however, not all rolling-shutter artifacts can be overcome. CMOS sensors with global-shutter capability are available, but their formats and video performance characteristics are not yet optimal for many life science requirements.
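One way to reason about timing a light source is to find the interval during which every row of a rolling shutter is integrating at once; a pulse that falls entirely inside it reaches all rows equally. The sketch below uses the simplified row-timing model (row i integrates over [i × line time, i × line time + exposure]), assumes the pulse is the dominant light source, and uses hypothetical function names and values. Note that such a window exists only when the exposure is longer than the total row offset.

```python
from typing import Optional, Tuple


def all_rows_window(rows: int, exposure_us: float,
                    line_time_us: float) -> Optional[Tuple[float, float]]:
    """Interval (us) during which every row of a rolling shutter is integrating.

    All rows overlap from the start of the last row to the end of the first
    row; that overlap exists only if the exposure outlasts the row offset.
    """
    last_row_start = (rows - 1) * line_time_us
    first_row_end = exposure_us
    if first_row_end <= last_row_start:
        return None
    return (last_row_start, first_row_end)


def pulse_reaches_all_rows(pulse_start_us: float, pulse_len_us: float,
                           rows: int, exposure_us: float,
                           line_time_us: float) -> bool:
    """True if a light pulse falls entirely inside the all-rows window."""
    window = all_rows_window(rows, exposure_us, line_time_us)
    return (window is not None
            and window[0] <= pulse_start_us
            and pulse_start_us + pulse_len_us <= window[1])


# Hypothetical: 1080 rows, 15 us line time.
print(all_rows_window(1080, 1000.0, 15.0))    # None: 1 ms is too short
print(all_rows_window(1080, 20000.0, 15.0))   # (16185.0, 20000.0)
print(pulse_reaches_all_rows(17000.0, 500.0, 1080, 20000.0, 15.0))  # True
```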

Pixel Density vs. Pixel Size

Pixel density and pixel size are often confused and misunderstood attributes of a video camera. We are influenced by the consumer products industry, which has done a phenomenal job of conditioning us to believe that more pixels must be better. A 40 MP camera on a mobile device must be substantially better than an 8 MP camera, right?

While pixel density is a valuable attribute that can contribute to higher resolution, pixel size actually has a greater influence on dynamic range, sensitivity, and noise, especially in low-light situations. All other things being equal, a larger pixel collects more signal and improves video performance. Most camera manufacturers don’t disclose pixel size, but a reasonable estimate can be calculated if you know the sensor size and pixel matrix.
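Why larger pixels help can be sketched with the photon shot-noise model: at the same light level, the signal a pixel collects scales with its area, and shot noise is the square root of that signal, so the shot-noise-limited SNR scales with pixel pitch. This is a simplified sketch that ignores read noise and dark current; the photon density and QE values are hypothetical.

```python
import math


def shot_noise_snr(pitch_um: float, photons_per_um2: float, qe: float) -> float:
    """Shot-noise-limited SNR of a square pixel with the given pitch.

    Collected electrons scale with pixel area (pitch squared); photon shot
    noise equals the square root of the signal, so SNR = sqrt(signal).
    Read noise and dark current are ignored.
    """
    electrons = photons_per_um2 * pitch_um ** 2 * qe
    return math.sqrt(electrons)


# Hypothetical exposure: 100 photons per square micron, QE of 0.5.
small = shot_noise_snr(1.5, 100.0, 0.5)   # 112.5 e-, SNR about 10.6
large = shot_noise_snr(6.4, 100.0, 0.5)   # about 2048 e-, SNR about 45.3
print(round(large / small, 2))            # 4.27, i.e. 6.4 / 1.5
```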

Let’s consider two examples. The GoPro® HERO3 you just bought has a 1/2.3 in. sensor measuring 6.17 mm x 4.55 mm, yet packs 12 MP into a 4000 x 3000 pixel matrix. To calculate pixel size, divide the sensor width and height by the number of pixels horizontally and vertically, respectively. This shows that the pixels are approximately 1.5 μm square. By contrast, a major microscopy manufacturer promotes one of its latest digital camera models as having 12.5 MP, output as a 4080 x 3072 pixel matrix. Its sensor is a 2/3 in. format measuring 8.8 mm x 6.6 mm, which would result in pixels approximately 2.15 μm square. If you are confident that the stated pixel matrix is the actual, or effective, pixel matrix, then this comparison is complete.
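The calculation above is simple enough to script. This sketch assumes the quoted sensor dimensions describe the active imaging area; nominal format sizes can differ slightly from the true active area, so treat the results as estimates. The function name is hypothetical.

```python
from typing import Tuple


def pixel_pitch_um(sensor_w_mm: float, sensor_h_mm: float,
                   cols: int, rows: int) -> Tuple[float, float]:
    """Estimate horizontal and vertical pixel pitch in micrometres."""
    return (sensor_w_mm * 1000 / cols, sensor_h_mm * 1000 / rows)


gopro = pixel_pitch_um(6.17, 4.55, 4000, 3000)   # stated 12 MP matrix
micro = pixel_pitch_um(8.8, 6.6, 4080, 3072)     # stated 12.5 MP output
print(f"GoPro: {gopro[0]:.2f} x {gopro[1]:.2f} um")
print(f"Microscopy: {micro[0]:.2f} x {micro[1]:.2f} um")
```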

If you can, always try to learn the actual, or effective, number of pixels on the sensor to determine pixel size more accurately. In this microscopy camera example, the specifications indicate that the 12.5 MP output is derived by pixel shifting. Pixel shifting is a technique many camera manufacturers use to improve spatial resolution, either by offsetting the sensors relative to one another in three-chip cameras or by shifting the sensor between successive exposures in single-chip cameras. When pixel-shift techniques are used, the actual number of pixels on the sensor can be lower than the stated output format. A closer look at the specifications reveals that the sensor’s effective pixel matrix is only 1360 x 1024, just under 1.4 MP, which results in pixels approximately 6.4 μm square.

What is both challenging and important in this comparison is getting the facts needed to determine pixel size, not the use of pixel shift, which is an accepted practice in camera design. The pixels of the microscopy camera are quite large, with more than 17 times the area of the GoPro’s pixels. I hope my pathologist doesn’t try to use a GoPro on the microscope.
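Repeating the estimate with the effective matrix shows how much the answer depends on which pixel count you use. This sketch compares pixel areas rather than pitches, assuming square-ish pixels over the quoted active dimensions; the function name is hypothetical.

```python
def pixel_area_um2(sensor_w_mm: float, sensor_h_mm: float,
                   cols: int, rows: int) -> float:
    """Approximate area of one pixel in square micrometres."""
    return (sensor_w_mm * 1000 / cols) * (sensor_h_mm * 1000 / rows)


gopro_area = pixel_area_um2(6.17, 4.55, 4000, 3000)
stated_area = pixel_area_um2(8.8, 6.6, 4080, 3072)      # pixel-shifted output
effective_area = pixel_area_um2(8.8, 6.6, 1360, 1024)   # effective matrix

print(1360 * 1024)                               # 1392640 pixels, just under 1.4 MP
print(round(stated_area / gopro_area, 1))        # 2.0 using the stated matrix
print(round(effective_area / gopro_area, 1))     # 17.8 using the effective matrix
```

Using the stated output matrix, the microscopy camera’s pixels look only about twice the area of the GoPro’s; using the effective matrix, the difference is nearly eighteenfold.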

3-Chip vs. 1-Chip

So what about three-chip technology? Does it still provide advantages, and is it relevant with today’s high-MP camera options?

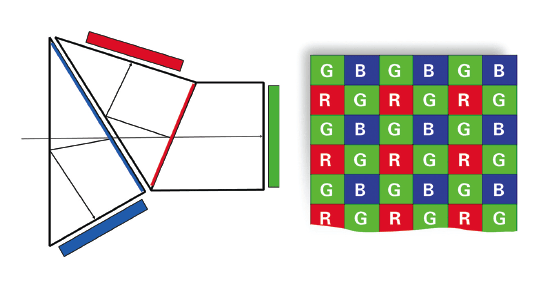

The principle behind three-chip cameras is to use a prism to separate light into its red, green, and blue components, with a dedicated sensor for each channel (Figure 1, left: prism block). This effectively triples the sensor area and allows precise control of each color channel. So right out of the gate, a three-chip camera offers improved sensitivity and color control.

Manufacturers rate their three-chip cameras by the size and pixel matrix of the individual sensors, not the combined result. Therefore, a 2.1 MP HD three-chip camera has three 2.1 MP sensors. If the effective pixel matrix is full HD (1920 x 1080) on a 1/3 in. sensor, the resulting pixel size is approximately 2.5 μm. Although this pixel size is still smaller than that of the microscopy camera discussed above, it is large enough to provide a good result while keeping the physical camera small. In principle, any sensor size can be built into a three-chip configuration, although for most life science applications 1/3 in. or 1/2 in. formats are best, as they are large enough to achieve a good ratio between pixel density and pixel size while keeping the overall camera small.

In a single-chip camera, the sensor’s pixel matrix is covered with a color filter mask, typically a Bayer type, which places a green, red, or blue filter directly over each pixel (Figure 1, right: Bayer filter). The human eye is most sensitive to visible light in the green wavelengths, and the Bayer pattern attempts to approximate this sensitivity by alternating rows of green and blue pixels with rows of green and red pixels. In the resulting array, 50% of the pixels are green and 25% each are blue and red. For a full HD, 2.1 MP sensor, roughly 1 million pixels will be green, 500,000 blue, and 500,000 red.
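The 50/25/25 split can be checked by laying out the mosaic and counting. This sketch assumes an RGGB arrangement (rows alternating red/green with rows alternating green/blue); other common arrangements such as GRBG yield the same counts. The function name is hypothetical.

```python
from collections import Counter


def bayer_counts(cols: int, rows: int) -> Counter:
    """Count filter colors in an RGGB Bayer mosaic of the given size.

    Even rows alternate R, G; odd rows alternate G, B.
    """
    pattern = (("R", "G"), ("G", "B"))
    counts: Counter = Counter()
    for r in range(rows):
        for c in range(cols):
            counts[pattern[r % 2][c % 2]] += 1
    return counts


counts = bayer_counts(1920, 1080)
print(counts["G"], counts["R"], counts["B"])  # 1036800 518400 518400
```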

Suppose we compare two full HD cameras that use the same sensor format, 1/3 in. for example: one a single-chip Bayer camera, the other a three-chip camera. The pixel matrix for both is 1920 x 1080 and the sensor size is the same 1/3 in., so the pixel sizes are also the same (2.5 μm). Which will provide the better result?

The single-chip camera will only provide 1 million pixels of green data, whereas the three-chip camera will provide 2.1 MP of green pixel data. In addition, the three-chip camera will provide four times the pixel information for the red and blue channels. The end result is increased resolution and improved sensitivity, particularly in low-light applications.
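The comparison reduces to counting native samples per color channel. This sketch counts only measured samples before demosaicing; demosaicing interpolates the missing values in a Bayer image but does not add measured data. The function name is hypothetical.

```python
from typing import Dict


def channel_samples(cols: int, rows: int, three_chip: bool) -> Dict[str, int]:
    """Native (measured) samples per color channel for one frame."""
    total = cols * rows
    if three_chip:
        # One full-resolution sensor per channel.
        return {"R": total, "G": total, "B": total}
    # Bayer mosaic: half green, a quarter each red and blue.
    return {"R": total // 4, "G": total // 2, "B": total // 4}


bayer = channel_samples(1920, 1080, three_chip=False)
prism = channel_samples(1920, 1080, three_chip=True)
ratios = {ch: prism[ch] / bayer[ch] for ch in "RGB"}
print(ratios)  # {'R': 4.0, 'G': 2.0, 'B': 4.0}
```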

While it may be possible to use a large single-chip sensor to approximate the pixel distribution and pixel size of the three-chip design, the mechanical and space constraints of many applications may not allow such a large sensor format and the resulting increase in camera size. Three-chip designs achieve an ideal balance between very compact mechanical size and exceptional video performance. An advanced three-chip CMOS HD camera is shown in Figure 2.

Emerging video formats, including Ultra HD or Quad Full HD (3840 x 2160), the Digital Cinema Initiatives (DCI) 4K standard (4096 x 2160), and even Super Hi-Vision 8K (7680 x 4320), depend on the capabilities and continuous improvement of CMOS sensor technology. Cameras, displays, video compression techniques, and image processing for these formats are quickly becoming available, providing higher resolution and increasingly immersive video content. Working with these new formats will challenge optics, storage, distribution, display, and image processing, which are often already at their limits handling full HD. We will have to wait and see whether the additional data and resolution are worth the necessary upgrades throughout the imaging chain.
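A quick way to gauge the extra load on the imaging chain is the uncompressed data rate of each format. This back-of-the-envelope sketch assumes 60 frames per second and 10-bit 4:2:2 sampling (20 bits per pixel on average), counts active picture only, and ignores blanking, ancillary data, and transport overhead; real interfaces carry more.

```python
FORMATS = {
    "Full HD": (1920, 1080),
    "UHD (QFHD)": (3840, 2160),
    "DCI 4K": (4096, 2160),
    "8K Super Hi-Vision": (7680, 4320),
}


def uncompressed_gbps(width: int, height: int, fps: int = 60,
                      bits_per_pixel: int = 20) -> float:
    """Active-picture data rate in Gb/s (assumes 10-bit 4:2:2 by default)."""
    return width * height * bits_per_pixel * fps / 1e9


for name, (w, h) in FORMATS.items():
    print(f"{name}: {uncompressed_gbps(w, h):.2f} Gb/s")
```

Under these assumptions, UHD carries four times the data of full HD (roughly 10 Gb/s versus 2.5 Gb/s), and 8K carries sixteen times as much.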

Summary

As we’ve seen, CMOS sensors outperform CCDs in many respects, particularly in most surgical imaging, microscopy, machine vision, and broadcasting applications. However, there are a few specialized applications in astronomy, particle detection, and certain imaging with motion where CCD technology should still be considered. When CMOS sensors are used in 3-chip cameras, one can fully realize improved resolution, sensitivity, and color reproduction that single-chip cameras cannot match. For typical full-motion video imaging, CMOS technology continues to advance and will meet the requirements of emerging formats like 4K, as well as advanced image processing functions that take advantage of the digital nature of CMOS.

This article was written by Paul Dempster, National Sales Manager, Toshiba Imaging Systems Division (Irvine, CA). For more information, contact Mr. Dempster at paul.