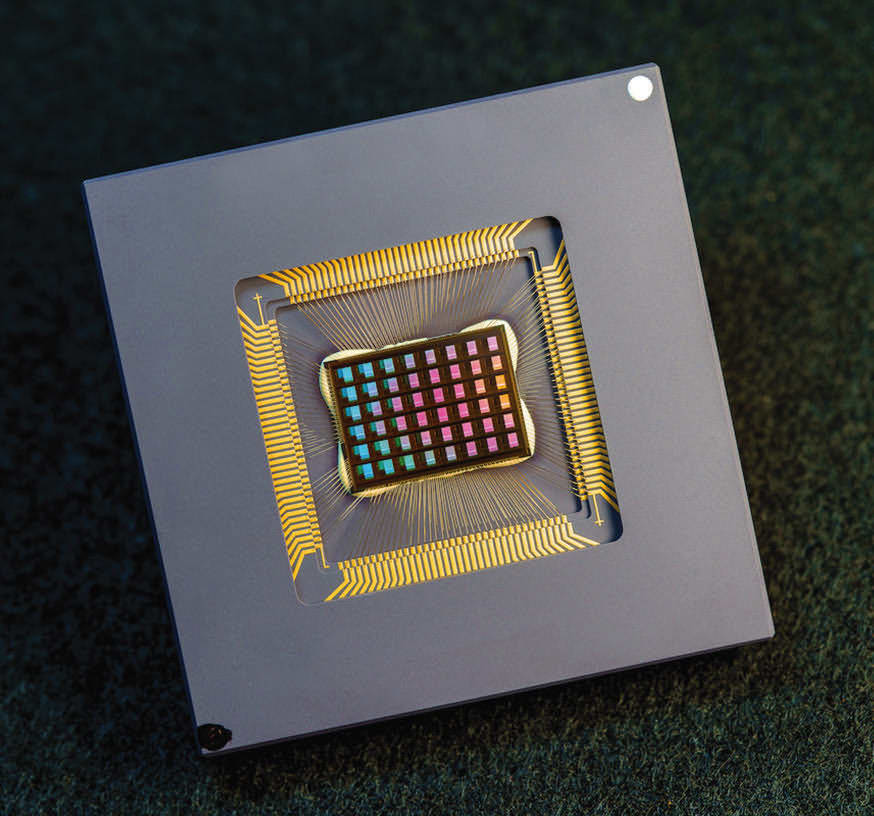

A team of UCSD researchers has designed and built a chip that performs computations directly in memory and can run a wide variety of AI applications, at a fraction of the energy consumed by general-purpose AI computing platforms.

The NeuRRAM neuromorphic chip brings AI one step closer to running on a broad range of edge devices disconnected from the cloud, where those devices can perform sophisticated cognitive tasks anywhere and at any time without a network connection to a centralized server.

The NeuRRAM chip is not only twice as energy-efficient as state-of-the-art "compute-in-memory" chips, but it also delivers results that are just as accurate as those of conventional digital chips. Conventional AI platforms are much bulkier and are typically constrained to large data servers operating in the cloud.

“The conventional wisdom is that the higher efficiency of compute-in-memory is at the cost of versatility, but our NeuRRAM chip obtains efficiency while not sacrificing versatility,” said Weier Wan, the paper’s first corresponding author and a recent Ph.D. graduate of Stanford University who worked on the chip while at UC San Diego, where he was co-advised by Gert Cauwenberghs in the Department of Bioengineering.

By reducing the power needed for AI inference at the edge, the NeuRRAM chip could lead to more robust, smarter, and more accessible edge devices, as well as smarter manufacturing. It could also lead to better data privacy.

On AI chips, moving data from memory to computing units is a major bottleneck. “It’s the equivalent of doing an eight-hour commute for a two-hour work day,” Wan said.

To solve this issue, the researchers used resistive random-access memory (RRAM), a type of non-volatile memory that allows computation to take place directly within the memory rather than in separate computing units.
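Compute-in-memory with resistive memory is commonly explained with a crossbar array: weights are stored as cell conductances, inputs are applied as row voltages, and Ohm's law plus Kirchhoff's current law produce a matrix-vector product as the currents on each column. The Python sketch below illustrates only that generic principle under idealized assumptions (no wire resistance, device noise, or analog-to-digital conversion). It is not a model of the NeuRRAM chip's actual circuits, and the function name `crossbar_mvm` and the conductance values are hypothetical.

```python
# Idealized resistive crossbar computing a matrix-vector product "in memory".
# Generic illustration only (hypothetical helper and values), NOT the NeuRRAM
# circuit: assumes ideal devices with no wire resistance, noise, or nonlinearity.

def crossbar_mvm(conductances, voltages):
    """Return the current collected on each column of the crossbar.

    conductances[i][j] is the conductance (siemens) of the cell at row i,
    column j; voltages[i] is the voltage (volts) driven onto row i.
    Ohm's law: each cell passes I = G * V.
    Kirchhoff's current law: currents sharing a column add up, so
    column j carries sum_i G[i][j] * V[i].
    """
    if len(conductances) != len(voltages):
        raise ValueError("need one input voltage per row")
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for i, row in enumerate(conductances):
        for j in range(n_cols):
            currents[j] += row[j] * voltages[i]
    return currents


# Weights stored as cell conductances (hypothetical values, in siemens).
G = [[1e-6, 2e-6],
     [3e-6, 4e-6],
     [5e-6, 6e-6]]
# Inputs encoded as row voltages (hypothetical values, in volts).
V = [0.1, 0.2, 0.3]

print(crossbar_mvm(G, V))  # roughly [2.2e-06, 2.8e-06] amperes
```

Because the multiply-accumulate happens where the weights are stored, the weights never travel to a separate compute unit, which is the data-movement cost that Wan's commute analogy describes.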

The researchers measured the chip’s energy efficiency using the energy-delay product (EDP), which multiplies the energy an operation consumes by the time it takes. By this measure, the NeuRRAM chip achieves 1.6 to 2.3 times lower EDP (lower is better) and 7 to 13 times higher computational density than state-of-the-art chips.
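Because EDP multiplies energy by delay, a chip cannot improve its score simply by trading speed for energy. The Python sketch below shows the arithmetic; the helper names and the energy and delay figures are hypothetical and are not measurements from the NeuRRAM work.

```python
def energy_delay_product(energy_joules: float, delay_seconds: float) -> float:
    """EDP in joule-seconds: energy per operation times the time it takes."""
    return energy_joules * delay_seconds


def edp_improvement(baseline_edp: float, new_edp: float) -> float:
    """How many times lower new_edp is than baseline_edp (higher is better)."""
    return baseline_edp / new_edp


# Hypothetical figures for illustration only, not measured chip data.
baseline = energy_delay_product(energy_joules=2.0e-9, delay_seconds=1.0e-6)

# Halving the energy at the same speed halves the EDP ...
candidate = energy_delay_product(energy_joules=1.0e-9, delay_seconds=1.0e-6)
# ... but halving the energy while taking twice as long gains nothing.
slower = energy_delay_product(energy_joules=1.0e-9, delay_seconds=2.0e-6)

print(f"candidate: {edp_improvement(baseline, candidate):.1f}x lower EDP")  # candidate: 2.0x lower EDP
print(f"slower: {edp_improvement(baseline, slower):.1f}x lower EDP")  # slower: 1.0x lower EDP
```

A reported figure such as "1.6 to 2.3 times lower EDP" corresponds to `edp_improvement` returning values in that range when a state-of-the-art chip is the baseline.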

The team ran various AI tasks on the chip. It achieved 99 percent accuracy on a handwritten-digit recognition task, 85.7 percent on an image-classification task, and 84.7 percent on a Google speech-command recognition task. The chip also achieved a 70 percent reduction in image-reconstruction error on an image-recovery task. These results are comparable to those of existing digital chips that compute at the same bit precision, but with major savings in energy.

The NeuRRAM chip can be used for many different applications, including image recognition and reconstruction as well as voice recognition. The next steps include improving architectures and circuits and scaling the design to more advanced technology nodes. The researchers also plan to tackle other applications, such as spiking neural networks.

For more information, contact Ioana Patringenaru at